The Bias You Don’t See Can Hurt Children

Have you heard the rumblings?

For some time now, people have complained about bias in algorithms. As it turns out, seemingly innocent math formulas have tremendous power to distort the outcomes they predict from data. Sometimes this bias is intentional; sometimes it’s an accident.

Researchers and investigators have discovered bias in a variety of settings, including hiring practices, retail sales, marketing, and security systems. Law enforcement and intelligence agencies have consistently used algorithms to predict which individuals are most likely to commit crimes. Universities use algorithms to determine which students are best suited for college.

Bias is undeniably present in many real-life applications.

The data isn’t bad; it’s the process

Concerns about algorithmic bias can make people wonder if we shouldn’t do away with algorithms altogether. Getting rid of algorithms, however, would be like throwing out the baby with the bathwater.

The challenge lies in how technologists address bias in the algorithms they use.

A very real problem exists among data analysts. They don’t realize that they are creating bias.

According to former CIA officer Yael Eisenstat, many of those employed at social media companies don’t understand how to measure or interpret bias. They do not understand the algorithms they are writing and using, and the programmers aren’t sure how their algorithms show bias.

It isn’t an error of commission. Most data analysts don’t set out to misinterpret facts. Instead, they commit an error of omission. They haven’t had the training they need, or they’ve forgotten an important step in the process. The unintentional mistakes become deeply embedded in a program, where they are nearly impossible to ferret out and correct.

Bias can infiltrate algorithms even in the early stages of planning and programming. If a scientist doesn’t notice the bias in an earlier algorithm, it is likely to be perpetuated in later iterations.

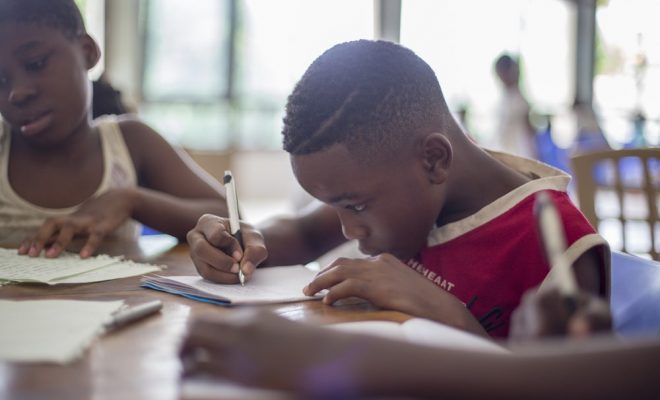

Through the eyes of children

Children have an amazing gift of being able to sift through bias — without elaborate mathematical formulas. Their insight helps them evaluate the actions of others, and they can discern when and where bias occurs.

You may have heard it pointed out in the simplest of terms as students cry, “That’s not fair!” Often they are right. An inequity exists, and they’re more than happy to point it out.

They might not know how it’s unfair; they just know it is.

Students become resentful of teacher bias in the classroom. They see when the teacher rewards the quietest and most polite students with inflated grades. They notice how often the more active students get sent to the principal’s office for being hyper in the classroom, especially if those students are ethnically or racially different from the teacher.

Intuition, however, isn’t enough to prove a point or solve a problem. We need facts to back it up.

Teach students how to improve equity by learning how to write bias-free algorithms.

Teach students algorithms

Put a puzzle in front of your students, and you’ll find that most of them love the mental challenge of trying to figure out the solution.

In part, that’s why they love coding so much. Kids like to look for patterns and find ways to replicate them. Algorithms, with their logical sequences and orderly progressions, are the ultimate brain teaser for learners. They are useful tools. These formulas become even more attractive when students learn that they can use algorithms to identify and eliminate bias.
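As one illustration of how a short algorithm can help identify possible bias, here is a minimal Python sketch that students could write themselves. It compares how often students in two groups are sent to the principal’s office. The group labels, the function names `referral_rates` and `disparity_ratio`, and the sample data are all made up for this example; they do not come from any real classroom or library.

```python
from collections import defaultdict


def referral_rates(records):
    """Return the share of students in each group who were sent to the office.

    `records` is a list of (group, was_referred) pairs. The layout is an
    assumption made for this illustration.
    """
    totals = defaultdict(int)
    referred = defaultdict(int)
    for group, was_referred in records:
        totals[group] += 1
        if was_referred:
            referred[group] += 1
    return {group: referred[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Divide the lowest group rate by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was referred, so there is no gap to report
    return min(rates.values()) / highest


# Hypothetical classroom log: four students per group.
sample = [
    ("group_a", True), ("group_a", False), ("group_a", False), ("group_a", False),
    ("group_b", True), ("group_b", True), ("group_b", False), ("group_b", False),
]

rates = referral_rates(sample)
ratio = disparity_ratio(rates)
print(rates)
print(ratio)
```

A ratio well below 1.0 does not prove bias on its own, but it turns a feeling of “that’s not fair” into a number that students can investigate and discuss.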

When we teach students how to write and solve algorithms, we have two teachable moments. The first is that bias is a real issue that must be addressed. The second is an opportunity to teach learners how to correct faulty algorithms that may lead to bias.

Eliminating bias in algorithms improves equality for everyone.